Lightning in 15 minutes¶

Required background: None

Goal: In this guide, we’ll walk you through the 7 key steps of a typical Lightning workflow.

PyTorch Lightning is the deep learning framework with “batteries included” for professional AI researchers and machine learning engineers who need maximal flexibility while super-charging performance at scale.

Lightning organizes PyTorch code to remove boilerplate and unlock scalability.

By organizing PyTorch code, lightning enables:

Full flexibility

Try any ideas using raw PyTorch without the boilerplate.

Reproducible + Readable

Decoupled research and engineering code enable reproducibility and better readability.

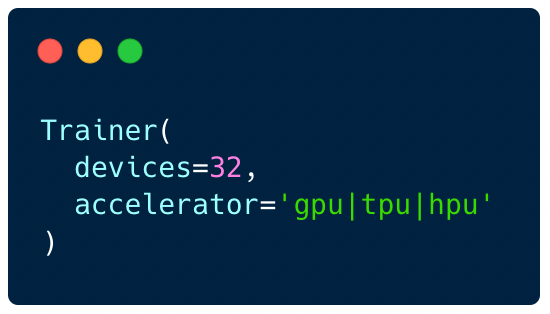

Simple multi-GPU training

Use multiple GPUs/TPUs/HPUs etc... without code changes.

Built-in testing

We've done all the testing so you don't have to.

1: Install PyTorch Lightning¶

Or read the advanced install guide

2: Define a LightningModule¶

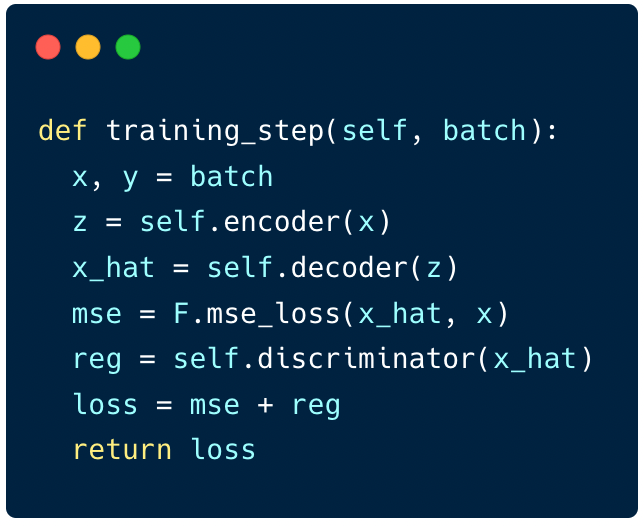

A LightningModule enables your PyTorch nn.Module to play together in complex ways inside the training_step (there is also an optional validation_step and test_step).

import os

from torch import optim, nn, utils, Tensor

from torchvision.datasets import MNIST

from torchvision.transforms import ToTensor

import lightning as L

# define any number of nn.Modules (or use your current ones)

encoder = nn.Sequential(nn.Linear(28 * 28, 64), nn.ReLU(), nn.Linear(64, 3))

decoder = nn.Sequential(nn.Linear(3, 64), nn.ReLU(), nn.Linear(64, 28 * 28))

# define the LightningModule

class LitAutoEncoder(L.LightningModule):

def __init__(self, encoder, decoder):

super().__init__()

self.encoder = encoder

self.decoder = decoder

def training_step(self, batch, batch_idx):

# training_step defines the train loop.

# it is independent of forward

x, y = batch

x = x.view(x.size(0), -1)

z = self.encoder(x)

x_hat = self.decoder(z)

loss = nn.functional.mse_loss(x_hat, x)

# Logging to TensorBoard (if installed) by default

self.log("train_loss", loss)

return loss

def configure_optimizers(self):

optimizer = optim.Adam(self.parameters(), lr=1e-3)

return optimizer

# init the autoencoder

autoencoder = LitAutoEncoder(encoder, decoder)

3: Define a dataset¶

Lightning supports ANY iterable (DataLoader, numpy, etc…) for the train/val/test/predict splits.

# setup data

dataset = MNIST(os.getcwd(), download=True, transform=ToTensor())

train_loader = utils.data.DataLoader(dataset)

4: Train the model¶

The Lightning Trainer “mixes” any LightningModule with any dataset and abstracts away all the engineering complexity needed for scale.

# train the model (hint: here are some helpful Trainer arguments for rapid idea iteration)

trainer = L.Trainer(limit_train_batches=100, max_epochs=1)

trainer.fit(model=autoencoder, train_dataloaders=train_loader)

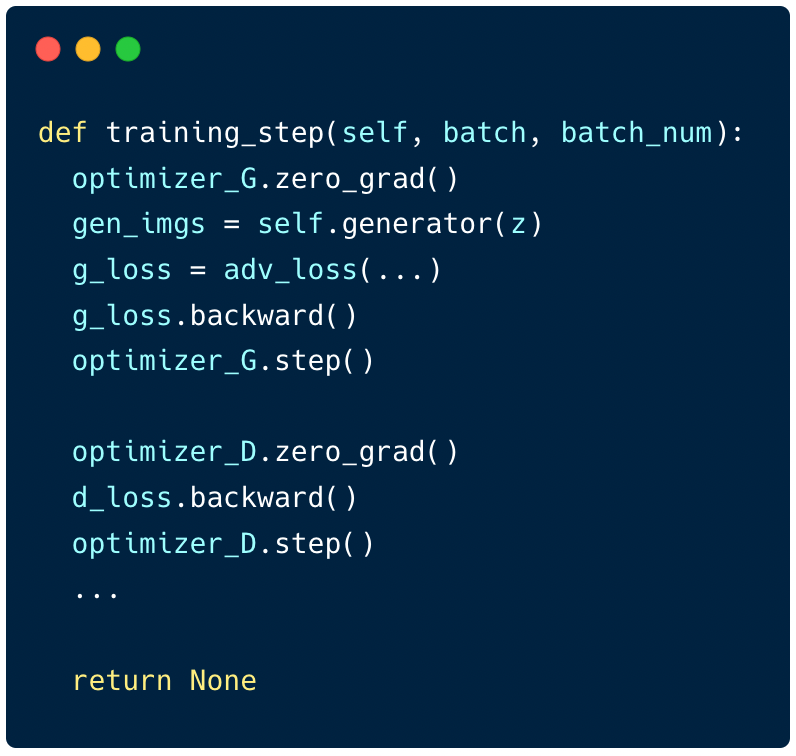

The Lightning Trainer automates 40+ tricks including:

Epoch and batch iteration

optimizer.step(),loss.backward(),optimizer.zero_grad()callsCalling of

model.eval(), enabling/disabling grads during evaluationTensorboard (see loggers options)

Multi-GPU support

16-bit precision AMP support

5: Use the model¶

Once you’ve trained the model you can export to onnx, torchscript and put it into production or simply load the weights and run predictions.

# load checkpoint

checkpoint = "./lightning_logs/version_0/checkpoints/epoch=0-step=100.ckpt"

autoencoder = LitAutoEncoder.load_from_checkpoint(checkpoint, encoder=encoder, decoder=decoder)

# choose your trained nn.Module

encoder = autoencoder.encoder

encoder.eval()

# embed 4 fake images!

fake_image_batch = torch.rand(4, 28 * 28, device=autoencoder.device)

embeddings = encoder(fake_image_batch)

print("⚡" * 20, "\nPredictions (4 image embeddings):\n", embeddings, "\n", "⚡" * 20)

6: Visualize training¶

If you have tensorboard installed, you can use it for visualizing experiments.

Run this on your commandline and open your browser to http://localhost:6006/

tensorboard --logdir .

7: Supercharge training¶

Enable advanced training features using Trainer arguments. These are state-of-the-art techniques that are automatically integrated into your training loop without changes to your code.

# train on 4 GPUs

trainer = L.Trainer(

devices=4,

accelerator="gpu",

)

# train 1TB+ parameter models with Deepspeed/fsdp

trainer = L.Trainer(

devices=4,

accelerator="gpu",

strategy="deepspeed_stage_2",

precision=16

)

# 20+ helpful flags for rapid idea iteration

trainer = L.Trainer(

max_epochs=10,

min_epochs=5,

overfit_batches=1

)

# access the latest state of the art techniques

trainer = L.Trainer(callbacks=[StochasticWeightAveraging(...)])

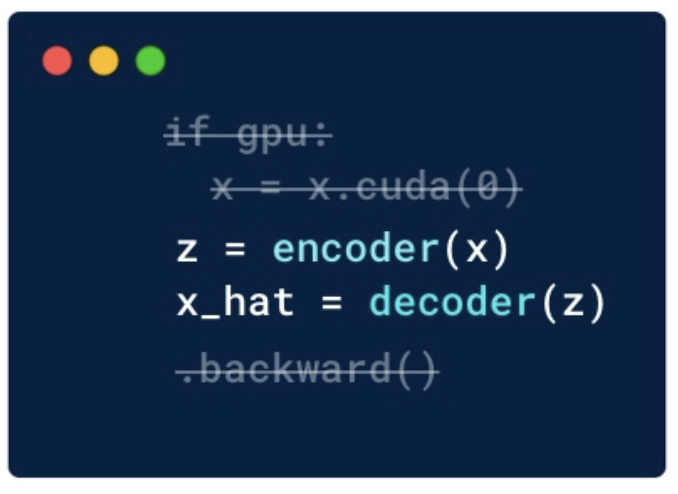

Maximize flexibility¶

Lightning’s core guiding principle is to always provide maximal flexibility without ever hiding any of the PyTorch.

Lightning offers 5 added degrees of flexibility depending on your project’s complexity.

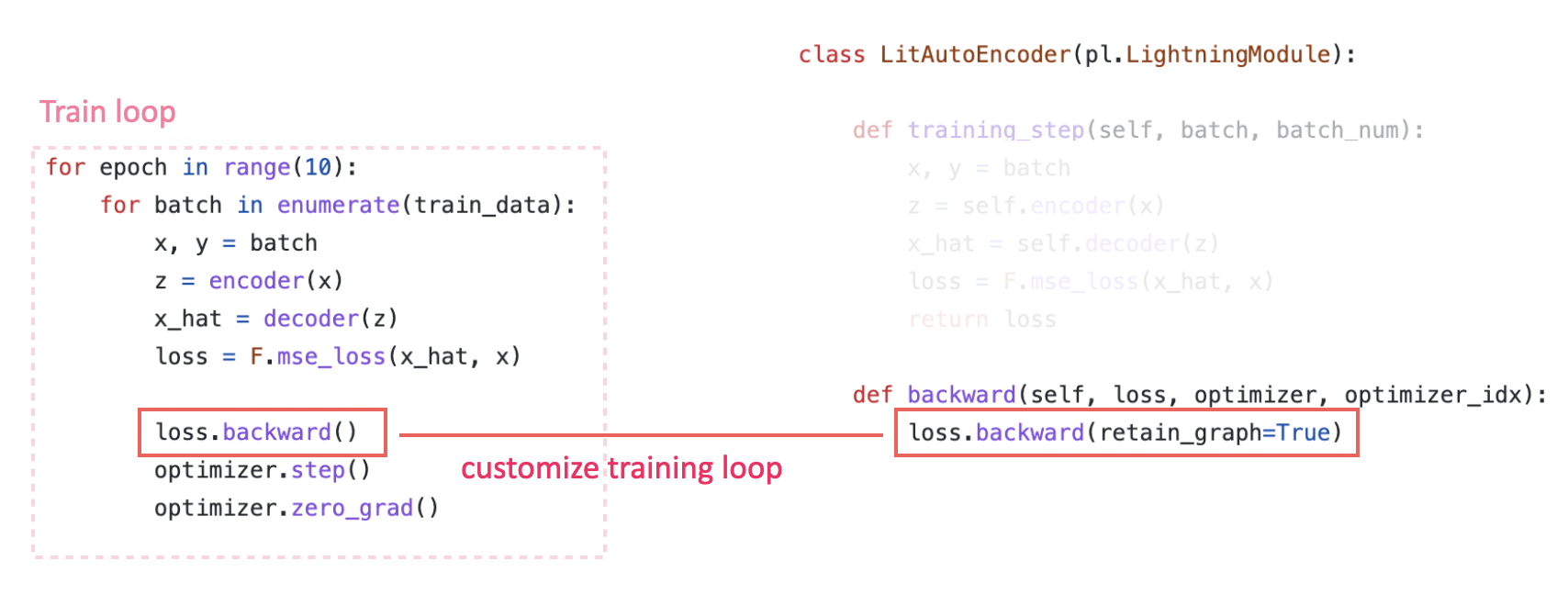

Customize training loop¶

Inject custom code anywhere in the Training loop using any of the 20+ methods (Hooks) available in the LightningModule.

class LitAutoEncoder(L.LightningModule):

def backward(self, loss):

loss.backward()

Extend the Trainer¶

If you have multiple lines of code with similar functionalities, you can use callbacks to easily group them together and toggle all of those lines on or off at the same time.

trainer = Trainer(callbacks=[AWSCheckpoints()])

Use a raw PyTorch loop¶

For certain types of work at the bleeding-edge of research, Lightning offers experts full control of optimization or the training loop in various ways.

Next steps¶

Depending on your use case, you might want to check one of these out next.